
The derivative of the sigmoid function is $ \frac{d \sigma(x)}{dx} = \sigma(x) \cdot (1 - \sigma(x)) $.

The derivative of the sigmoid function is a fundamental concept in machine learning and deep learning, particularly within the context of neural networks.

As an activation function, the sigmoid function, denoted $\sigma(x) = \frac{1}{1+e^{-x}}$, introduces non-linearity into neural network models, helping them learn complex patterns during training.

Importantly, the derivative of this function, expressed as $\sigma'(x) = \sigma(x)(1-\sigma(x))$, plays a crucial role in the backpropagation algorithm, which computes the gradients used to update the weights of the network in response to errors.

My understanding is that knowing how this derivative is calculated and applied helps in optimizing neural networks, which can improve performance and accuracy in tasks such as image and speech recognition, natural language processing, and more.

What excites me is how this seemingly simple mathematical concept helps to unravel the intricate complexities of data and the real world.

Derivative of the Sigmoid Function

In exploring the sigmoid function, understanding its derivative is crucial for implementing learning algorithms in neural networks. It’s the foundation for the training process in many machine learning models.

Mathematical Derivation

The sigmoid function, sometimes known as the logistic function, is given by the equation:

$$ \sigma(x) = \frac{1}{1 + e^{-x}} $$

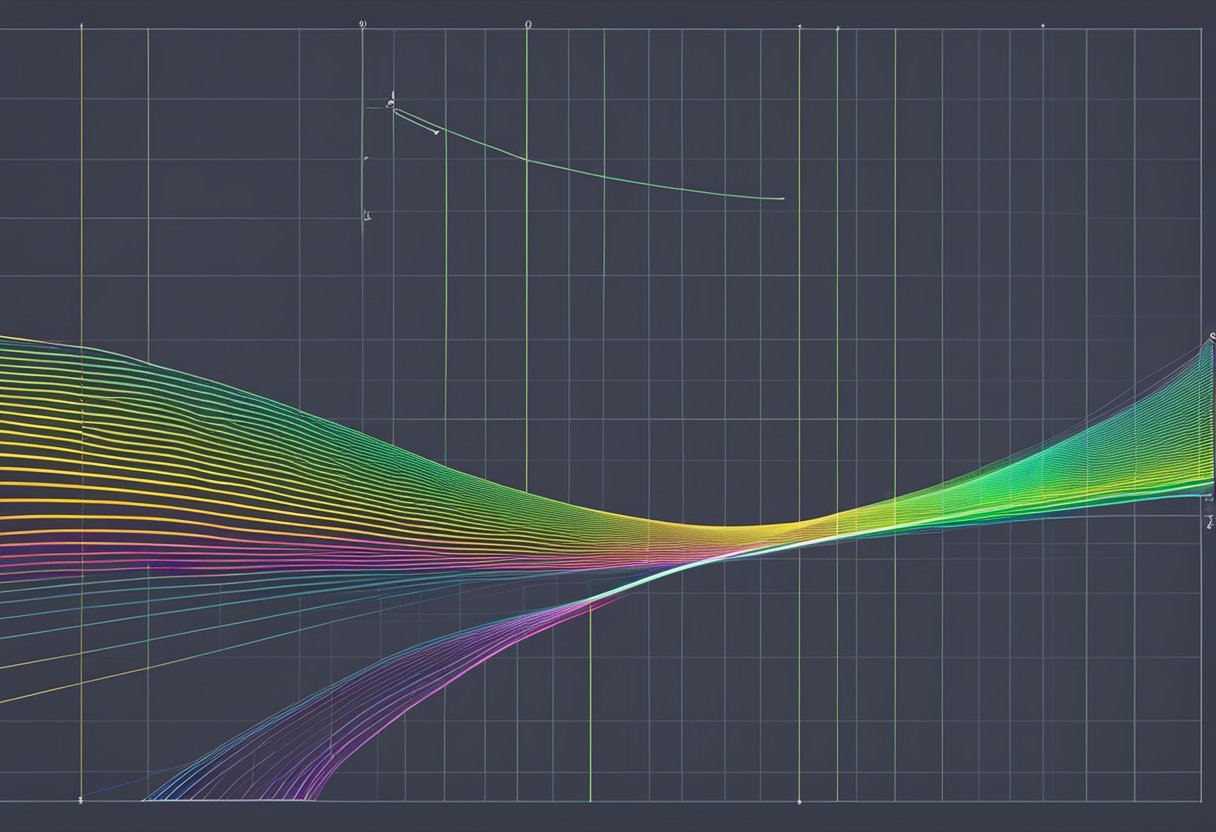

This function is pivotal in deep learning, notably due to its S-shaped curve, which squashes any real input into the open interval (0, 1), so its output can be read as a probability.

To obtain the derivative of the sigmoid function, I apply calculus tools such as the chain rule. The derivative, which represents the slope of the function at any point, reveals the rate of change of the function’s output with respect to its input. The derivative is calculated as follows:

$$ \frac{d \sigma(x)}{dx} = \sigma(x) \cdot (1 - \sigma(x)) $$
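
The intermediate steps are short enough to write out. As a sketch, rewriting the function as $\sigma(x) = (1 + e^{-x})^{-1}$ and applying the chain rule gives:

$$ \frac{d \sigma(x)}{dx} = -(1 + e^{-x})^{-2} \cdot (-e^{-x}) = \frac{e^{-x}}{(1 + e^{-x})^{2}} $$

Splitting the fraction into two factors, and noting that $1 - \sigma(x) = \frac{e^{-x}}{1 + e^{-x}}$, leads to the compact form:

$$ \frac{e^{-x}}{(1 + e^{-x})^{2}} = \frac{1}{1 + e^{-x}} \cdot \frac{e^{-x}}{1 + e^{-x}} = \sigma(x) \cdot (1 - \sigma(x)) $$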

This result showcases the beauty of the sigmoid function: the derivative can be expressed in terms of the function itself. This property is extremely useful when computing gradients during backpropagation in neural networks, because the output already computed in the forward pass can be reused to get the derivative without evaluating another exponential.
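
To make this concrete, here is a minimal Python sketch that computes the derivative from the function's own output and compares it with a finite-difference estimate. The names `sigmoid`, `sigmoid_derivative`, and `central_difference` are my own illustrative choices and do not come from any particular library.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # safe: x is negative, so exp(x) cannot overflow
    return z / (1.0 + z)


def sigmoid_derivative(x: float) -> float:
    """Derivative expressed through the function itself: s * (1 - s)."""
    s = sigmoid(x)
    return s * (1.0 - s)


def central_difference(f, x: float, h: float = 1e-5) -> float:
    """Numerical slope estimate, used here only as a sanity check."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


for x in (-2.0, 0.0, 3.0):
    analytic = sigmoid_derivative(x)
    numeric = central_difference(sigmoid, x)
    print(f"x={x:+.1f}  analytic={analytic:.6f}  numeric={numeric:.6f}")
```

The two columns should agree to several decimal places. At $x = 0$ the derivative equals 0.25, which is the largest value it can take.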

Application in Machine Learning

The derivative of the sigmoid function is a cornerstone when working with artificial neural networks. During the training phase, specifically in gradient descent and backpropagation, the derivative aids in adjusting weights to minimize the loss function, essentially learning from the data.

In the context of deep learning, this derivative helps inform how much the weights should change to achieve better performance. Adjusting these weights is how a model learns to distinguish between different data points and create a decision boundary.
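
As a rough illustration of how the derivative enters a weight update, the sketch below trains a single sigmoid neuron with plain gradient descent. Everything specific here is my own assumption for the example: the squared-error loss, the learning rate, the number of epochs, the toy data, and the function name `train_neuron`.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def train_neuron(samples, lr: float = 0.5, epochs: int = 2000):
    """Fit a = sigmoid(w * x + b) with per-sample gradient descent.

    Assumes the loss L = 0.5 * (a - y)**2 (an illustrative choice).
    """
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, y in samples:
            a = sigmoid(w * x + b)    # forward pass
            dL_da = a - y             # derivative of the loss w.r.t. the output
            da_dz = a * (1.0 - a)     # sigmoid derivative, reusing the output
            delta = dL_da * da_dz     # chain rule: dL/dz
            w -= lr * delta * x       # dz/dw = x
            b -= lr * delta           # dz/db = 1
    return w, b


# Hypothetical toy data: negative inputs belong to class 0, positive to class 1.
samples = [(-2.0, 0.0), (-1.0, 0.0), (1.0, 1.0), (2.0, 1.0)]
w, b = train_neuron(samples)
for x, y in samples:
    print(f"x={x:+.1f}  target={y:.0f}  prediction={sigmoid(w * x + b):.3f}")
```

The term `a * (1.0 - a)` is where the sigmoid derivative scales each update. When the neuron's output is close to 0 or 1, that factor is small and the weights barely move.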

However, it’s worth noting that the sigmoid function and its derivative carry the risk of the vanishing gradient problem. Because $\sigma'(x)$ never exceeds 0.25 and approaches 0 for large $|x|$, gradients tend to shrink as they are propagated back through many layers, potentially slowing down learning or causing it to plateau prematurely.
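
A small sketch can show how quickly this shrinkage happens. The setup below is a deliberately simplified assumption of mine, not a real network: a chain of single sigmoid units, each with the same hypothetical weight and no bias, where `gradient_through_chain` returns the derivative of the final output with respect to the input.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def gradient_through_chain(x: float, depth: int, weight: float = 1.0) -> float:
    """Derivative of the last activation w.r.t. x for a chain of sigmoid units.

    Each layer computes a = sigmoid(weight * a_prev); by the chain rule the
    total gradient is the product of weight * a * (1 - a) over all layers.
    """
    grad = 1.0
    a = x
    for _ in range(depth):
        a = sigmoid(weight * a)
        grad *= weight * a * (1.0 - a)
    return grad


for depth in (1, 2, 5, 10, 20):
    print(f"depth={depth:2d}  gradient={gradient_through_chain(0.0, depth):.3e}")
```

With unit weights, each layer multiplies the gradient by at most 0.25, so the gradient after `depth` layers is bounded by `0.25 ** depth`.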

Alternatives such as ReLU and other non-linear activation functions have been introduced to mitigate this issue.

By grasping the relationship between the sigmoid function and its derivative, I gain deeper insights into the internal workings of machine learning algorithms and the learning process itself.

Conclusion

In this discussion, I’ve covered the importance of the derivative of the sigmoid function in various machine learning applications.

The key takeaway is that the sigmoid function is crucial due to its continuous nature and differentiability, which allows for effective backpropagation in neural networks.

The derivative itself, given by the expression $\sigma'(x) = \sigma(x)(1 - \sigma(x))$, highlights the rate of change of the sigmoid function at any point, which is essential for gradient-based optimization algorithms.

I’ve emphasized how gradients facilitate the adjustment of weights in a neural network, leading to successful learning.

It’s clear from the simplicity of its derivative that the sigmoid function is computationally convenient, despite some drawbacks like vanishing gradients. As such, it remains a staple in the toolbox of machine learning techniques, even as the field continues to evolve and alternative activation functions like the ReLU function become more prominent.

By understanding this derivative, we gain deeper insight into how neural networks learn and why the sigmoid function, with its output between 0 and 1, is particularly suited for binary classification problems.

I hope that this knowledge empowers your future endeavors in AI and machine learning, whether you’re implementing basic models or diving into more complex architectures.